I am a Lecturer in Graphics and Vision at Birmingham City University, UK, where I work in the Graphics and Vision Research Group.

Research Interests

Real-time Computer Graphics, Perception and Displays

Advances in display technology are allowing increasingly high-fidelity displays to be produced. As pixel density increases, however, it becomes increasingly challenging to render content at full resolution in real time. Additionally, the bandwidth required to stream content to these displays becomes problematic. Given access to eye tracking, how can we exploit this information to reduce bandwidth and computational requirements without sacrificing perceived quality? How should our knowledge of human perception influence the way we design displays?

Computer Graphics for Mixed Reality

Mixed reality creates new challenges when producing high-quality real-time graphics. The rendered virtual content must not only appear realistic, but also be consistent with the existing real content. One challenge is how to effectively estimate the real lighting environment, and how to use that estimate to render the virtual content. Another is accurately determining where virtual content is obscured by real content, and so should not be rendered.

Publications

2025

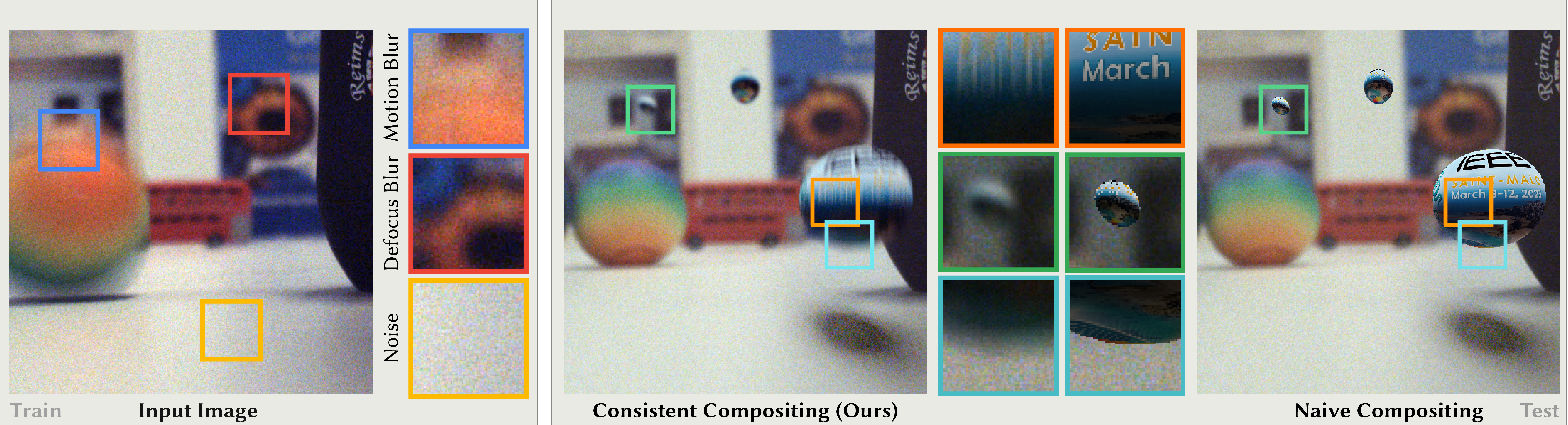

Blind Augmentation: Calibration-free Camera Distortion Model Estimation for Real-time Mixed-reality Consistency

Siddhant Prakash, David R. Walton, Rafael K. dos Anjos, Anthony Steed and Tobias Ritschel

To appear in TVCG 2025 (will be presented at IEEEVR 2025): [Preprint] [Webpage]

2023

Metameric Inpainting for Image Warping

Rafael Kuffner dos Anjos, David R. Walton, Kaan Aksit, Sebastian Friston, David Swapp, Anthony Steed and Tobias Ritschel

TVCG 2023: [Paper] [Video] [Webpage]

This paper applies the principles from Beyond Blur to real-time image inpainting, with applications in warping rendered RGBD images to improve framerate, or to fill in missing details in inputs such as 360 video. Missing regions of the image are filled based on image statistics gathered around the disoccluded regions.

2022

Beyond Flicker, Beyond Blur: View-coherent Metameric Light Fields for Foveated Display

Prithvi Kohli, David R. Walton, Rafael Kuffner dos Anjos, Anthony Steed and Tobias Ritschel

Poster presented at IEEEVR 2022: [Page] [Poster] [Short Paper] [Teaser Video]

This work extends metamer generation to 3D light fields. These present some unique challenges when trying to create metamers with coherent, convincing motion in the periphery. We show how these light field metamers can be generated, and explore how they can be efficiently compressed.

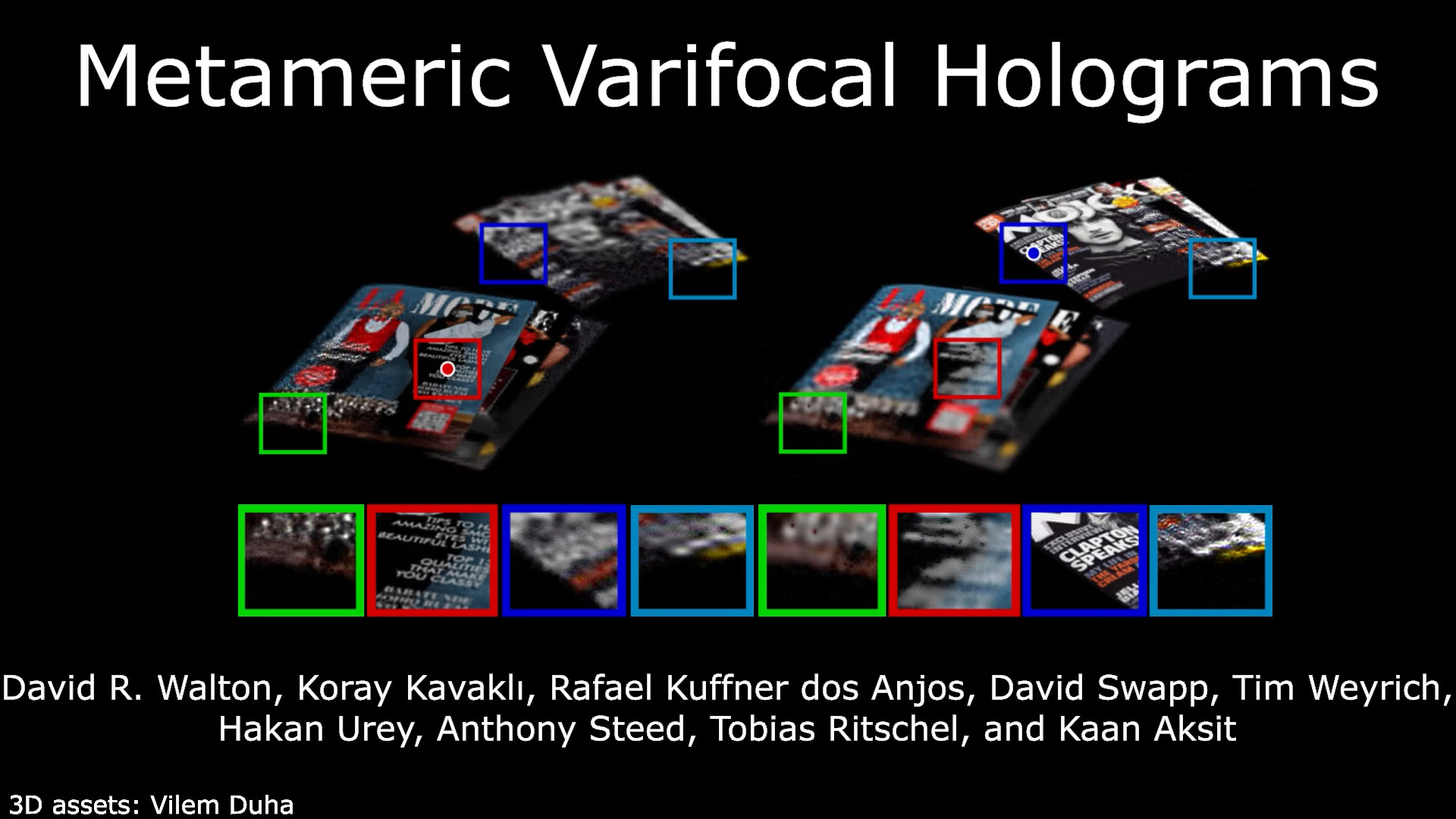

David R. Walton, Koray Kavakli, Rafael Kuffner dos Anjos, David Swapp, Tim Weyrich, Hakan Urey, Anthony Steed, Tobias Ritschel and Kaan Aksit

IEEEVR 2022: [PDF(ArXiv)] [Page] [Video] [Supplementary Material] [Code] [Library]

This work focuses on improving computer-generated holograms where gaze information is known. It exploits gaze in two ways. First, it uses a metameric loss, based on our earlier Beyond Blur paper, which only penalises perceivable content in the hologram. Second, the holograms are optimised to be correct only at the user’s current focal plane. We test our results on a real holographic display prototype.

2021

Beyond Blur: Real-time Metamers for Foveated Rendering

D Walton, R Kuffner-dos Anjos, S Friston, D Swapp, A Steed, T Ritschel

ACM Trans Graph (Proc. SIGGRAPH 2021) 40(3): [PDF] [Webpage] [Unity Package] [Windows Demo] [Python Example]

This work introduces a new method for foveated rendering using ventral image metamers. These are alternative versions of images which are indistinguishable from the original for a given fixation point. We introduce a method to extract a model of the perceivable components of an image for a given fixation point, and a method to convert this model to a metamer of the input. Both methods are fast, and the model is compact, allowing metamers to be used for the first time in real-time compression and rendering applications.

2019

Improved Real-time Rendering for Mixed Reality (EngD Thesis)

David R. Walton [PDF]

My EngD project focused on real-time techniques for enhancing graphics in MR applications. I focused on how to interpret and exploit data from sensors such as RGBD and fisheye cameras to capture detailed information about the real world, and render more realistic and consistent MR scenes.

2018

Dynamic HDR Environment Capture for Mixed Reality

David R. Walton and Anthony Steed

VRST 2018: [PDF] [Bibtex] [Video]

This paper builds on the earlier work in Synthesis of Environment Maps for Mixed Reality below. We present new techniques allowing the estimation of full HDR environment maps, and allowing us to respond much more quickly to changes in the real 3D environment. These improvements do not require any additional sensing hardware. We demonstrate a full AR application capable of working in real time.

2017

Accurate Real-time Occlusion for Mixed Reality

David R. Walton and Anthony Steed

VRST 2017: [PDF] [Bibtex] [Video] [Code]

In MR applications, correctly handling occlusion of virtual objects by real ones is critical to maintaining a good user experience, but this remains a significant challenge. Consumer depth sensors can be used for this purpose, but the depth maps they provide are noisy, incomplete and often unreliable. This paper presents a technique for using these depth maps to estimate a high-quality occlusion matte. We also develop a technique for quantitatively comparing the quality of AR occlusion handling methods, and use it to assess our approach and others.

Synthesis of Environment Maps for Mixed Reality

David R. Walton, Diego Thomas, Anthony Steed and Akihiro Sugimoto

High-quality estimation of surrounding lighting is important for rendering realistic virtual objects in AR. Particularly when rendering specular, mirror-like virtual materials, high-frequency environment lighting is required. This paper presents techniques for estimating a 360 degree environment map around a virtual object, constructed using data from a two-camera system consisting of a depth camera and a fisheye camera. We show how these sensors can be used in a real-time system that tracks the motion of the cameras and updates a 3D scene model in real time, using this to estimate environment maps and render realistic 3D objects.

Patents

Augmented Reality Occlusion

David R. Walton, Imagination Technologies Ltd. [Google Patents]

Analysing Objects in a Set of Frames

Aria Ahmadi, David R. Walton, Cagatay Dikici, Imagination Technologies Ltd. [Google Patents]

Career

Lecturer, BCU 2023-Present

I currently work as a Lecturer in Graphics and Vision in the Graphics and Vision Research Group at Birmingham City University.

Research Associate, UCL 2020-2023

From 2020-2023 I worked as a research fellow in the Virtual Environments and Computer Graphics group at UCL, working on novel graphics techniques, displays and perceptual graphics.

Research Engineer, Imagination Technologies 2018-2020

From 2018-2020 I continued to work at Imagination Technologies and was promoted to Research Engineer.

EngD Student, UCL & Imagination Technologies 2014-2018

From 2014-2018 I worked on an EngD project, a collaboration between the Virtual Environments, Imaging and Visualisation Centre at UCL and Imagination Technologies. It focused on novel real-time rendering techniques for AR, particularly on techniques applicable to mobile devices. This was supervised by Prof. Anthony Steed from UCL, and Luke Peterson and Paul Brasnett of Imagination Technologies.

The EngD included a taught MRes component, during which I took courses including Computer Vision, Computer Graphics and Virtual Reality. As part of the VR course group project, we developed an immersive VR application for the CAVE. I passed the MRes component with a distinction and was added to the Dean’s list.

During the EngD I completed a 6-month internship at the National Institute of Informatics in Tokyo, supervised by Prof. Akihiro Sugimoto and collaborating with Diego Thomas of Kyushu University.

BSc Mathematics, MSc Computer Science and Applications, University of Warwick 2009-2013

I completed my BSc in Mathematics at the University of Warwick, obtaining a 1st class degree. I continued on to an MSc in Computer Science and Applications, gaining a distinction and the prize for best overall graduating student. My MSc dissertation focused on techniques for real-time computer graphics in OpenGL.

Contact

Email: david.walton at bcu.ac.uk